Targeted Superlearning

In IPD meta-analysis, univariate moderator analyses are commonly used to identify patients with lower or higher treatment effects. However, their ability to do so may often be limited. The true mechanism that determines how much patients benefit from a psychological intervention is usually unknown; but it will likely depend on a complex interplay of multiple variables that is not easily captured by a simple treatment-covariate interaction. In this sense, even the approaches used in Articles 3 and 4 to model individualized treatment benefits are still fairly restricted: they assume that HTE can be sufficiently explained by one pre-defined parametric model, or a single data-adaptive algorithm.

To this end, in Article 6 (“Estimating Effect Variability Using Targeting Superlearning”), I explore HTE with models that can detect more complex treatment-covariate interactions, based on the targeted superlearning framework (Naimi & Balzer, 2018; Phillips et al., 2023; Polley et al., 2011). I employ this approach in a comprehensive IPD database of preventive intervention trials for subthreshold depression, and examine effects on depressive symptom severity up to 24 months. This section provides some further background and technical details on this methodology, as well as on ancillary software developed as part of the study. The same technical descriptions may also be found, along with other details, in the preregistration of the analysis (Harrer, Sprenger, et al., 2023).

Superlearning (also known as stacked regression; Breiman, 1996) is an ensemble machine learning method that combines multiple prediction algorithms based on their performance in a data set, which is determined using V-fold cross-validation. Prediction algorithms employed in a superlearner typically include both simple linear regression models as well as more “aggressive”, data-adaptive algorithms (e.g., boosting models, support vector machines, neural nets). By combining multiple learners, a superlearner has been shown to asymptotically perform as well as or better than the best-performing individual algorithm included in the framework (“oracle” property; Van der Laan et al., 2007). This method has also been found to be robust across various contexts, and even in very small samples (Montoya et al., 2022; Polley et al., 2011). A key motivation behind superlearning is that the true data-generating process that produced some observed data is often unknown but may be complex, where the framework ensures that the ensemble will converge with the best possible solution for the learning task at hand.

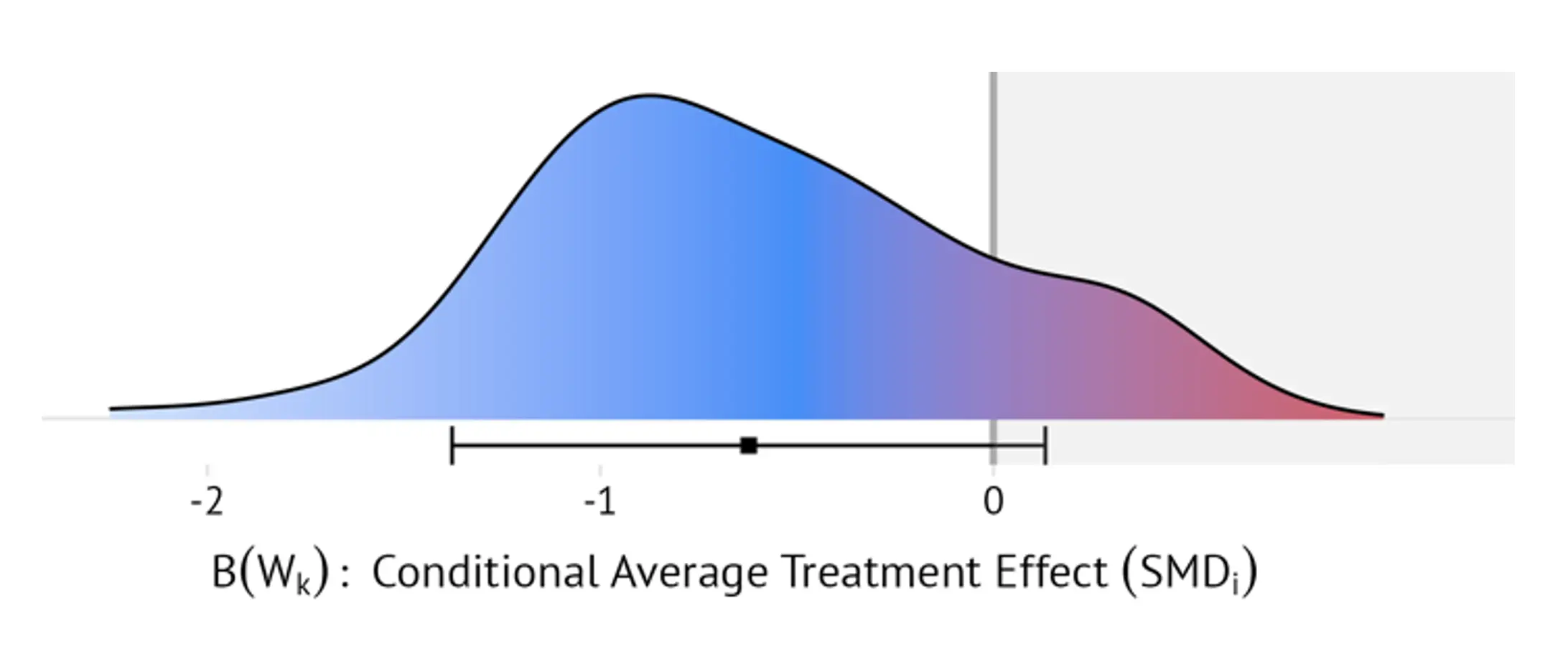

In a treatment setting, the superlearner framework can also be combined with targeted maximum likelihood estimation (TMLE) (Van Der Laan & Rubin, 2006) to estimate optimal dynamic treatment rules (ODTRs) (Murphy, 2003). This ODTR assigns each patient to the optimal (i.e., most effective) treatment based on their pre-test values. This is typically achieved by first estimating the “blip function” (Robins, 2004). This function uses a patient’s set of pre-test values as input and then returns an individualized treatment effect estimate (the “blip”), as derived by the superlearner. While often used as an intermediary step to derive the ODTR, blip distributions themselves can also be of substantive interest (Montoya et al., 2022). For instance, they allow to examine how much patient benefits may vary within a study, who benefits most, and which patients may experience negative effects (i.e., fare worse under treatment than under control).

For each study, targeted superlearning was therefore used to estimate the blip distribution across all patients in the trial. Since superlearners were constructed separately for each study, the maximum amount of baseline information included in the IPD database could be used. A broad selection of candidate algorithms was considered within the ensemble, ranging from linear models to more complex machine learning architectures (see Table 1 in Harrer, Sprenger, et al., 2023, for an overview). The distribution of blips, expressed as patient-specific standardized mean differences (SMD), was then presented visually to gauge how strongly individual effects may differ within and across studies. Based on the blips, I also calculated the meta-analytic proportion of patients who experience (i) negative effects, or (ii) only clinically negligible benefits of the preventive interventions (defined as individualized SMDs < 0.24; Cuijpers et al., 2014). Lastly, I constructed an interactive web app that uses the estimated blips to generate individualized meta-analytic effect estimates for specific populations or individuals (e.g., women under the age of 40 with PHQ-9 scores below 6, or any other combination of patient characteristics that were assessed across studies).

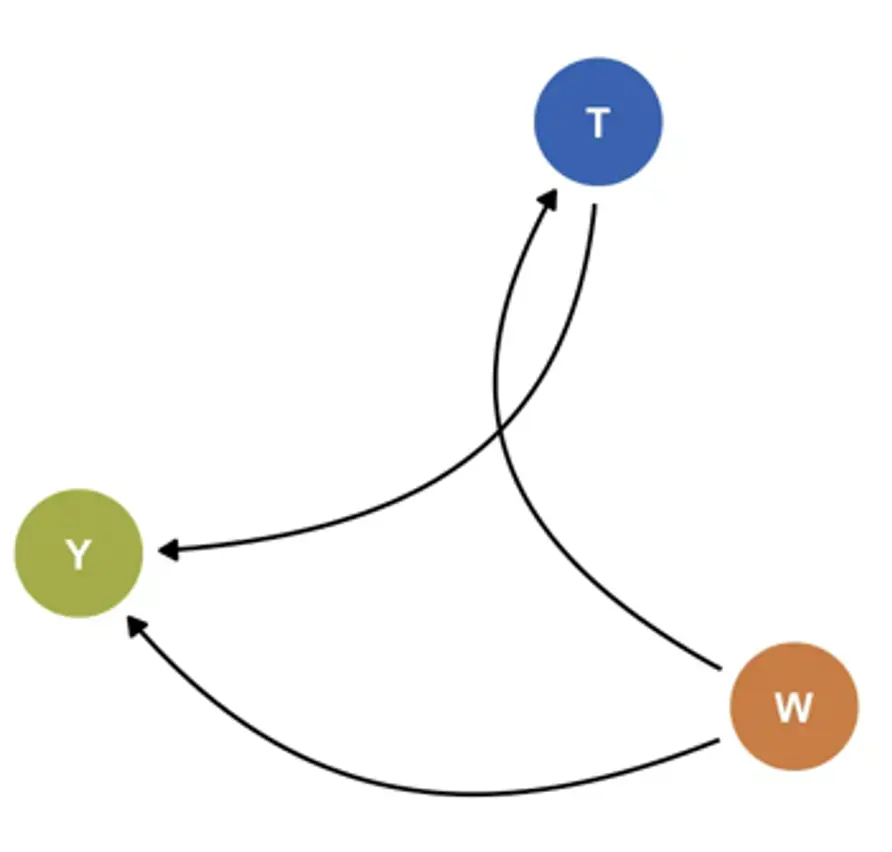

I now provide a more technical elaboration of the approach3With slight adaptations, I follow the notation used in Montoya et al. (2022, 2023).. Assume that the individual participant data $\mathcal{D}_k$ provided by each trial $k$ consists of three components: (i) the vector of baseline covariates $W_k$ collected in the trial, (ii) the (binary) treatment $T_k$, and (iii) the outcome of interest $Y_k$; in our case, patients’ depressive symptom severity. We say that $\mathcal{D}_k=\left(W_k,T_k,Y_k\right)$ with $\mathcal{D}_k\sim P_k$, where $P_k$ is the true data distribution underlying the observed data. Let $\mathcal{M}_k$ denote a structural causal model of $P_k$ (Pearl, 2009), where $U_k=\left(U_{Wk},U_{Tk},U_{Yk}\right)$ are exogenous (i.e., unobserved) variables. The relationship between the observed and unobserved variables can then be expressed through three structural equations (Díaz Muñoz & van der Laan, 2011; Montoya et al., 2022, 2023):

Where $f_{Wk}$ and ${f}_{Yk}$ are unknown functions. According to this model, $Y_k$ is a function of the measured covariates $W_k$, unmeasured variables $U_{Yk}$, and treatment $T_k$. Since $k$ is a randomized trial, it is directly defined by $U_{Tk}$, which has a known distribution: $U_{Tk}\sim\mathrm{Bern}\left(p\right)$, where $p$= 0.5 in most cases. Based on this system of data-generating equations, the density of $\mathcal{D}_k$ can be factorized as:

Where $p_{Wk}$ is the density of $W_k$, $g_k$ is the treatment assignment mechanism, and $p_{Yk}$ is the conditional density of $Y_k$ given $T_k$ and $W_k$. Since $k$ is an RCT, $g_k$ is known and the positivity assumption $\textsf{P}\left(\textsf{min}\ g_k\left(T_k=t\middle| W_k\right)>\textsf{0} \right)=\textsf{1}$ with $t\in {\textsf{0 = control, 1 = treatment}}$ is known to hold. Also, define $Q_k\left(t,w\right)$ as the conditional expected value of $Y_k$ given $T_k$ and $W_k$; that is $Q_k\left(t,w\right) = \mathbb{E}\left[Y_k\middle| T_k=t,W_k=w\right]$. The blip function $B_k\left(W_k\right)$ can be derived from these conditional expectations by alternatingly setting $t=1$ and $t=0$ while $w$ is held constant:

This definition shows that the blip as analogous to the conditional average treatment effect (CATE) (Vegetabile, 2021); the counterfactual treatment effect for some individuals with identical covariate values $W_k$. The blip function is approximated by first estimating $Q_k\left(T_k,W_k\right)$ and $g_k\left(T_k\middle| W_k\right)$ using the superlearner. Then, the Augmented-Inverse Probability Weighted (AIPW) estimator (Glynn & Quinn, 2010) is used to estimate the double-robust pseudo-outcome $D_k\left(Q_k,g_k\right)$:

For RCTs, this doubly robust estimator guarantees that $\mathbb{E}\left[D_k\left(Q_k,g_k\right)\middle| W_k\right]=B\left(W_k\right)$. The blip function is then estimated using the superlearner by regressing estimates of $D_k\left(Q_k,g_k\right)$ on $W_k$. This provides an estimate of the individualized treatment effect (i.e., blip) for each person i in trial k based on their covariates $W_{ik}$. We denote this individualized treatment effect estimate as $B_{n,k}\left(W_{ik}\right)$. Once estimated for all studies, **ridgeline plots** displaying the densities of $B_{n,k}\left(W_{ik}\right)$ within and across trials can be created (see Figure 15 for an illustration).

I also estimated the proportion of patients with negative and clinically negligible effects, defined as $\frac{1}{N} \sum_{i=1}^{N} \mathbb{I}\left[ B_{n,k}(W_{ik}) > 0 \right]$ and $\mathbb{I}\left[ B_{n,k}(W_{ik}) > -0.24 \right]$, respectively, and pooled them using a random-effects model. Furthermore, I develop a web application that allows to examine the blip distributions in greater detail (metapsy.dev/prevdep-explorer). Along with other functionality, this effect explorer allows users to calculate adjusted treatment effects for specific patient groups (e.g., patients whose PHQ-9 values and age fall within a certain range, patients who take antidepressants, etc.) based on the estimated blip functions. To do this, in each study, the application regresses all values of $B_{n,k}\left(W_{ik}\right)$ on the set of moderators that have been assessed consistently in all or most trials:

where $x_{ikj}\in W_{ik}$ is person $i$’s value on one of the $j=1,\ldots,J$ variables that are assessed across studies. As with the last superlearner step, a side-effect of using $B_{n,k}\left(W_{ik}\right)$ as the response is that the coefficients $\gamma_{kj}$ directly estimate treatment-covariate interactions, while the need to estimate main effects is avoided (Kessler et al., 2019). For each study, the fitted model $f_k\left(X_i;{\hat{\gamma}}_k\right)$ can then be used to obtain an adjusted estimate of the average treatment effect via regression standardization:

where $X^{\textsf{new}}$ is a “counterfactual” data grid that contains one replicate of the data for every predictor value combination of interest. It should be noted that Equation (23) will often be a substantially simpler model than the original blip superlearner. However, this approach leads to easily interpretable estimates of treatment-covariate interactions based on the estimated blips, allows for a straightforward calculation of the variance of ${\hat{\mu}}_k$ using the delta method, and ensures that adjusted marginal estimates can be obtained relatively fast across all included trials, which facilitates the deployment in a web application. Once ${\hat{\mu}}_k$ and its sampling variance has been obtained for each study using this method, a pooled random-effects estimate and its between-study heterogeneity variance $\tau^2$ can be calculated and displayed within the application.